Maintenance, Cost, and Quality: The 4th – 6th Challenge in Web Data Acquisition Project

What do you need to include in your cost model to maintain a stream of high quality web data?

Hello again. We have three more challenges to cover: Maintaining, Cost, and Quality.

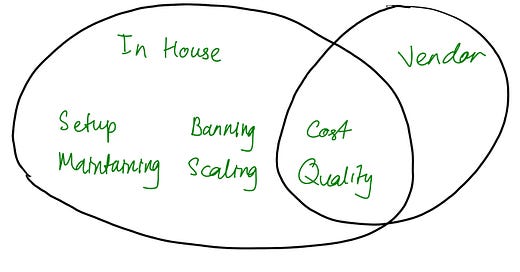

Let’s first do a quick recap. We have covered the first three challenges you can run into in web data acquisition projects: Setup, Banning, and Scaling.

Now, in order to properly talk about the challenge in Maintaining a data acquisition project, let’s first have a look at Cost and Quality.

Cost

It really takes a village to produce a data feed and to keep the data feed flowing.

Here are the cost components in a typical data acquisition project

Cost of IP (proxy) rental / subscription

Crawl engineers

QA engineers

Data engineers

Project managers

If you’re doing your data acquisition in-house, these are the things you need to account for in your cost model. When you’re buying rather than doing it in-house, these are the things you’re really paying for. When shopping for data vendors, it makes sense to try and understand your monthly cost for these items as a baseline for comparison. Data vendors usually charge monthly recurring fee for the subscription of the data — sometimes with a setup fee for the service and other deliverables.

It is also crucial to think not only of the cost you will incur but also in terms of opportunity cost — what potential revenue are you sacrificing by allocating your resources on these data acquisition activities rather than activities that are closer to the core of your business? How do you make sure you’re making a sensible trade-off?

Quality

What do we mean by quality? There are two main type of issues around quality in web data acquisition projects:

Quality of data

Quality of service

These two issues will inevitably occur in data acquisition projects once it hit a certain scale and stage. Let’s look at them closer.

Quality of data

Three types of data quality issues:

Item coverage. The level of record / item that’s collected against what’s expected. When you collected 90k records from 100k that’s on the website / data source, that means you have an item coverage of 90%.

Field coverage. The level that a record / item contains the field. E.g. when 15% of the 90k records don’t contain product price information that actually exist on the website / data source (failure in picking up that field at all), that’s 85% field coverage score for the product price field.

Field accuracy. The level that a record / item contains the field with the expected information. E.g. when 5% of the 90k records contain truncated product title information compared to what’s on the website / data source (failure in picking up that field correctly), that’s 95% field accuracy score for the product title field.

You see this is exactly the three parts you want to ensure you have early detection systems, scenario handling, and fallback plan for — that we talked about last week.

Quality of service

When working with DaaS (Data as a Service) or SaaS vendors for data acquisition toolings, there are certain SLAs the vendor will offer. These usually consist of

response time

resolution time

delivery guarantee

… and all the items in the quality of data aspect above.

Maintenance

Now, what are the different things we actually need to maintain in order to keep the data flowing?

Code: monitoring, fixes

Infrastructure: healthy pool of IPs, server uptime and health, storage, RAM, CPU

Team: hiring, training, retention, management

It pays to be proactive with our maintenance efforts. Do you know how you will track whether a datafeed is healthy? What’s your plan if something breaks? How do you know which part has broken?

Don’t step on the gas before putting your seatbelt on. Set up your monitors upfront.

Here are some common monitors to implement for each crawler in your project:

When there are less items collected than expected

When a certain deviation / drop in quantity of items, indicating an issue or something related to your business logic

When certain fields are not populated (extractor breakage)

Any runtime error — indicates bug or exception

Running and maintaining a web data acquisition project is even more complex than maintaining most software development and service delivery because in web data acquisition projects, we are interacting with third party systems that often are deliberately trying to keep us away making it a fragile and high-maintenance piece of work.

Closing thoughts

That wrapped up this series. Now that we have seen all six challenges, it became clear that if you do your data acquisition in-house, then you will be managing all six challenges. But if you get your data from data vendors, you will primarily only deal with two types of challenges: cost and quality of their services. This is the reason many companies decide to outsource all or some of their data acquisition process.

It might seem dry and abstract at this point but these are the basic framework and language of data acquisition project management that we will keep coming back to and base many of future discussions on. For example if you are considering launching a data provider service / becoming a data vendor, we can map different customer journeys and product opportunities from these six challenges because they are essentially the pain points of your potential customers.

Next week we will look at this question: What do people use data for? What are they trying to do, really?